AI in Fintech Branding: How to Build Trust When Buyers Fear Risk, Compliance and Hype

Founder / Creative Director

@13:00, 04.03.2026

AI is now a core requirement for remaining competitive in financial services. The most common question financial services organisations ask of AI is “can we trust it?”

Trust in AI is hard to earn, not because buyers dislike automation but because the stakes are high. Financial services buyers face real risks: regulatory exposure, reputational damage, operational disruption, model risk, and career risk from unpredictable outcomes.

Money and hallucinations don’t mix.

When fintech brands lead with “AI-powered” headlines, many buyers respond with caution rather than excitement.

Successful brands are not those with bold AI claims but those that make risk manageable, explainability standard, and adoption safe.

This is a branding problem, not just a product problem.

Buyers assess not only the tool’s capabilities but also whether choosing you is a safe decision for their organisation.

Why AI branding fails in fintech

AI messaging in fintech tends to fall into three traps:

1. Hype language undermines credibility

“Revolutionary”, “game-changing”, “next-gen”, “disruptive”, “transformational”.

In finance, such language often signals that a product is unproven.

2. Feature-first messaging forces the buyer to translate

If buyers first see model types, architectures, or capability lists, they must interpret complex information.

Interpretation creates friction. Friction increases perceived risk.

3. Guarantee-style claims invite scrutiny

Any claim that sounds like a guarantee (accuracy, prevention, prediction, security) raises questions:

- How do you know?

- Under what conditions?

- What are the failure modes?

- What happens when it’s wrong?

In regulated environments, ambiguity is not marketing; it is a liability.

The core shift: sell assurance, not intelligence

In fintech, the real value of AI is rarely “smartness”.

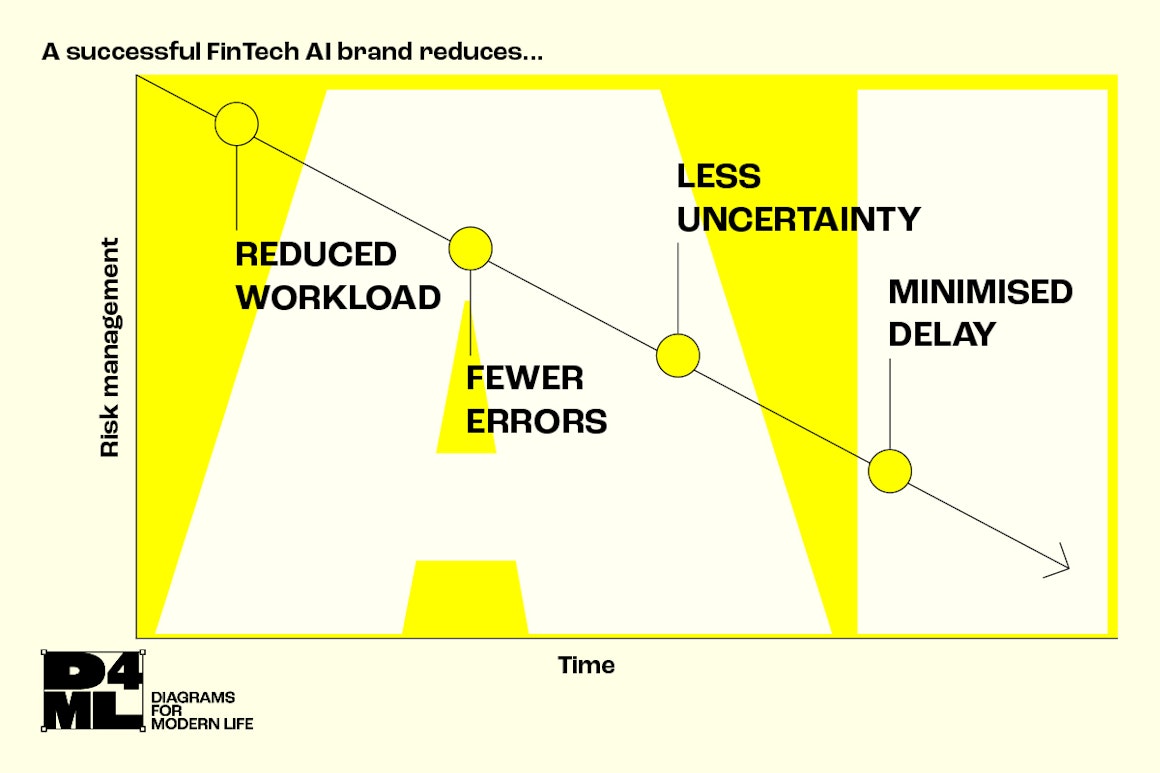

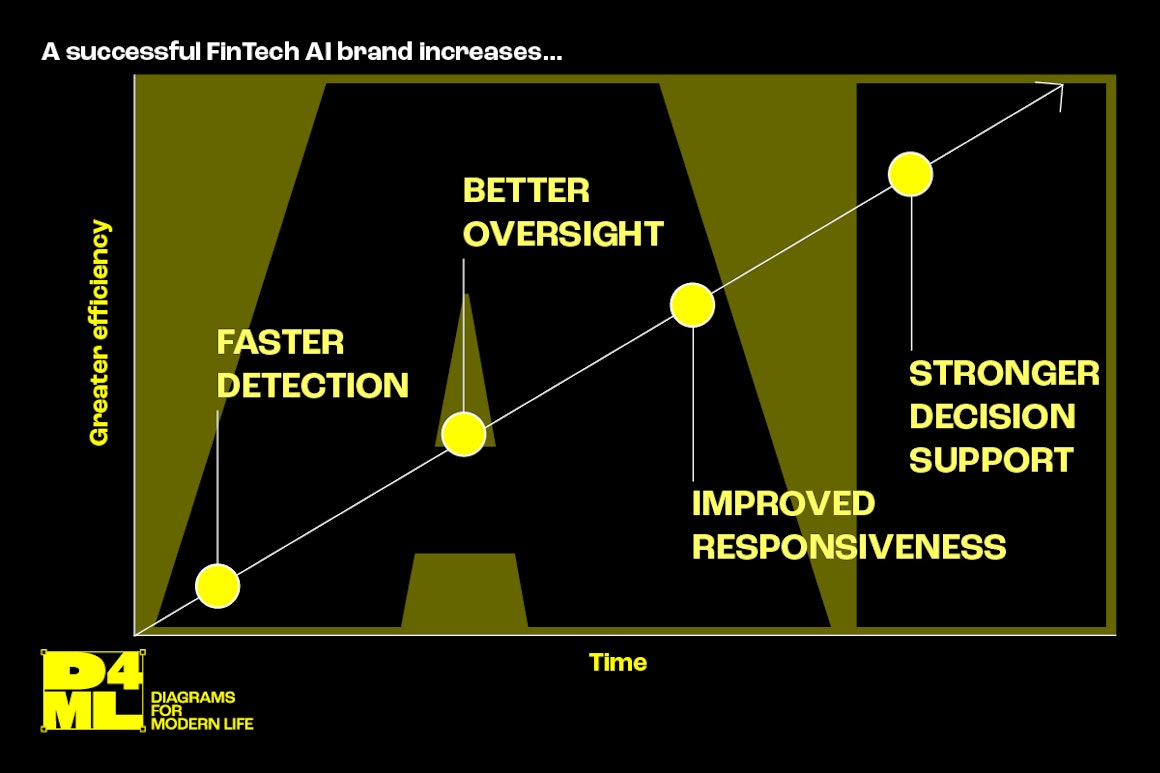

The real value is reduced workload, faster detection, fewer errors, better oversight, improved responsiveness, and stronger decision support. In short: less risk and less drag.

So your brand must be built around assurance.

This does not mean being boring. It means being specific, measurable, and grounded in the buyer’s realities.

The trust signals fintech buyers actually notice

Here are the credibility levers that consistently reduce fear, especially when AI is involved.

1. Risk framing before capability

Buyers begin not with “what can it do?” but with “what could go wrong?”

Openly addressing risk immediately differentiates your brand from most competitors.

Practical examples of risk-framing statements:

- “Designed for auditability and oversight, not black-box automation.”

- “Built to support decisions, with clear escalation paths.”

- “Model outputs are explainable and traceable by default.”

- “Human-in-the-loop is not an add-on - it’s the operating model.”

Effective website example:

Include a brief “How we manage risk” section near the top of core pages, not buried in compliance documents.

2. A clear stance on human control

Much of the fear around AI in fintech stems from job and accountability risk.

Even if the product is excellent, the buyer is thinking: Who owns the decision when the model suggests the wrong thing?

Your brand should make it clear that:

- AI supports accountability; it doesn’t replace it

- Outputs can be challenged

- There is oversight, review, and escalation

This makes adoption feel operationally realistic rather than idealised.

3. Explainability that is normal, not defensive

“Explainable AI” gets used as a buzzword. Instead, show what it means in practice:

- What the user sees

- What gets logged

- What can be exported

- How decisions can be defended in audits

Simple, credible phrasing beats jargon:

“Every recommendation includes a reason trail and can be exported for review.”
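To make that sentence concrete, here is a minimal Python sketch of what a reason trail with export might look like behind the scenes. The `Recommendation` class, its field names, and the example values are hypothetical illustrations, not taken from any specific product.

```python
import json
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone


@dataclass
class Recommendation:
    """One model recommendation plus the evidence a reviewer would need."""
    case_id: str
    action: str
    confidence: float
    reasons: list[str] = field(default_factory=list)
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def export(self) -> str:
        """Serialise the full record, reasons included, for audit review."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)


# Hypothetical example record, for illustration only.
rec = Recommendation(
    case_id="CASE-001",
    action="flag_for_review",
    confidence=0.82,
    reasons=[
        "Transaction amount is well above this customer's usual range",
        "Beneficiary account was created recently",
    ],
)
exported = rec.export()
print(exported)
```

The point for the brand story is that the reasons travel with the recommendation, so what the user sees, what gets logged, and what gets exported are the same record.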

4. Proof that mirrors the buyer’s constraints

The strongest proof is not big logos but relevance.

Fintech buyers want to see:

- Similar regulatory environments

- Similar workflows

- Similar data sensitivities

- Similar security expectations

- Similar failure consequences

If you can’t publish detailed case studies, you can still publish credible proof:

- Anonymised outcomes (time saved, reduction in manual checks, faster case resolution)

- Redacted screenshots of outputs and audit logs

- Workflow diagrams that show how AI fits into oversight

- “What we won’t do” boundaries

5. Use specific language on accuracy and limitations

Overclaiming is one of the fastest ways to lose trust.

Instead of claiming “high accuracy”, credible AI fintech brands talk about:

- Confidence levels

- Thresholds

- Monitoring

- Drift detection

- Review processes

- What happens when the system is uncertain

A brief paragraph acknowledging limitations signals trust and demonstrates maturity.
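As a rough illustration of how thresholds and “what happens when the system is uncertain” can be stated plainly, the sketch below routes a model output by its confidence score. The threshold values, the `Thresholds` class, and the route names are assumptions made for this example; real values would depend on the use case and its risk appetite.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    suggest: float = 0.95       # hypothetical value; tune per use case
    needs_review: float = 0.70  # hypothetical value


def route(confidence: float, t: Thresholds = Thresholds()) -> str:
    """Decide what happens to a model output based on its confidence."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= t.suggest:
        return "suggest_to_user"          # shown as a suggestion, not a decision
    if confidence >= t.needs_review:
        return "queue_for_human_review"
    return "withhold_and_escalate"        # the system is uncertain


for score in (0.98, 0.80, 0.40):
    print(score, "->", route(score))
```

Being able to describe a rule this simply on a product page is often more persuasive than an accuracy percentage.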

6. Make security and compliance visible product principles

Many brands hide security and compliance details in footers, which buyers view as a red flag.

You don’t need to publish sensitive details. You do need to show that security is designed in, not bolted on.

What to make visible:

- Where data is processed and stored (at a high level)

- Access controls and role-based permissions

- Audit trails and logging

- Data retention options

- Deployment options (where relevant)

Making these elements visible reduces procurement friction.
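For readers who want a picture of how role-based permissions and audit trails fit together, here is a minimal Python sketch. The roles, permission names, and user names are hypothetical, and a real system would use persistent, tamper-evident storage rather than an in-memory list.

```python
from datetime import datetime, timezone

# Hypothetical roles and permissions, for illustration only.
ROLE_PERMISSIONS = {
    "analyst": {"view_case"},
    "reviewer": {"view_case", "override_recommendation"},
    "auditor": {"view_case", "export_audit_log"},
}

audit_log: list[dict] = []


def is_allowed(user: str, role: str, action: str) -> bool:
    """Check a permission and record the attempt, allowed or not."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "action": action,
        "allowed": allowed,
    })
    return allowed


print(is_allowed("alice", "analyst", "override_recommendation"))
print(is_allowed("bob", "reviewer", "override_recommendation"))
print(len(audit_log))
```

Note that denied attempts are logged as well as permitted ones, which is the kind of detail procurement and risk teams tend to look for.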

7. Develop a point of view that feels earned

To stand out in AI fintech, you need a distinctive, authentic belief.

Examples of credible points of view:

- “The goal isn’t automation. It’s fewer preventable errors.”

- “AI should make compliance teams faster, not more exposed.”

- “The best models are the ones you can explain to a regulator.”

- “AI is only valuable when it fits the operating model.”

A strong point of view gives your brand a spine. It makes your content quotable. It makes your narrative sticky.

How to structure your messaging so trust lands fast

A simple trust-first hierarchy for fintech AI pages:

1. Outcome headline: what changes in the buyer’s world

2. Assurance line: how risk is managed (oversight, auditability, constraints)

3. Proof: relevant examples, numbers, artefacts

4. How it works: capability in plain language

5. Compliance and security: visible, skimmable

6. FAQ: answer the feared questions directly

This order matters. If you start with features, many buyers won’t reach the trust-building sections.

Common buyer fears you should answer explicitly

A strong fintech AI brand doesn’t avoid these. It meets them.

Add an FAQ section that addresses questions like:

- What happens when the model is wrong?

- How do you prevent hallucinations or false positives?

- Can we audit how a decision was made?

- Where does data go, and who can access it?

- Can we control what the model can and cannot do?

- How do you handle model drift and monitoring?

- How do we roll this out safely without disrupting teams?

Clear answers achieve two outcomes:

- They increase conversion by reducing uncertainty

- They increase the chances of citation in AI-driven search by providing structured, extractable content

Trust is not a claim. It’s an operating model.

The biggest branding mistake in fintech AI is assuming trust comes from polished language.

It doesn’t.

Trust comes from showing that you understand the buyer’s risk landscape and have designed the product, process, and story around it.

If your brand can make a cautious buyer think:

“This feels safe. This feels controllable. This feels defensible.”

then you’re no longer competing on who has the smartest AI.

You’re competing on who makes AI easiest to adopt in the real world.